Designing a fraud detection platform that stays ahead of fraudsters requires more than reactive, sticky-tape fixes and patchwork solutions. It demands a modular, purpose-built architecture rooted in AI and continuous intelligence.

Lab vs. Production – Where Intelligence is Created and Deployed

The Unified Fraud Detection Platform (UFDP) operates across two tightly coupled tiers with distinct roles:

- Model Development Tier (Lab) is where intelligence is created: agents are designed, trained, and iteratively tested. This includes crafting the Agentic AI framework, defining decisioning logic, building context awareness, and simulating perception/reflection loops. Tools such as LangChain, LlamaIndex, and other GenAI libraries are used extensively here.

- Production Execution Tier is where this intelligence is put into action: fraud signals arrive via API calls from external functional modules, agents are orchestrated to detect fraud in real time and integrated into enterprise workflows, and detection is continuously improved through live feedback and telemetry.

The Production Execution Tier doesn’t ingest or transform raw data. Instead, it receives structured runtime signals—like login attempts, payment triggers, or transaction metadata—via secure APIs from enterprise systems. These inputs are evaluated by pre-trained agents and orchestrators promoted from the Lab environment. This clean separation ensures UFDP transitions from experimentation to execution in a scalable, secure, and intelligence-driven manner.
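To make this boundary concrete, here is a minimal sketch of how a production endpoint might accept only structured signals and reject anything else before an agent sees it. The schema (`RuntimeSignal`), its field names, and the allowed signal types are hypothetical illustrations, not part of UFDP as described here.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Mapping, Optional

# Hypothetical signal types the production tier accepts; anything else is rejected.
ALLOWED_SIGNAL_TYPES = {"login_attempt", "payment_trigger", "transaction"}
EXPECTED_FIELDS = {"signal_type", "customer_id", "channel", "amount", "metadata"}


@dataclass(frozen=True)
class RuntimeSignal:
    """A curated, structured signal sent by a caller system (illustrative schema)."""
    signal_type: str
    customer_id: str
    channel: str
    amount: Optional[float] = None
    metadata: Dict[str, Any] = field(default_factory=dict)


def parse_signal(payload: Mapping[str, Any]) -> RuntimeSignal:
    """Validate an API payload and convert it into a RuntimeSignal.

    Raises ValueError on unexpected keys, unknown signal types, missing
    identifiers, or negative amounts, so raw or free-form data never
    reaches the agents.
    """
    unexpected = set(payload) - EXPECTED_FIELDS
    if unexpected:
        raise ValueError(f"unexpected fields: {sorted(unexpected)}")
    if payload.get("signal_type") not in ALLOWED_SIGNAL_TYPES:
        raise ValueError(f"unsupported signal_type: {payload.get('signal_type')!r}")
    for name in ("customer_id", "channel"):
        if not payload.get(name):
            raise ValueError(f"missing required field: {name}")
    amount = payload.get("amount")
    if amount is not None and float(amount) < 0:
        raise ValueError("amount must be non-negative")
    return RuntimeSignal(
        signal_type=payload["signal_type"],
        customer_id=str(payload["customer_id"]),
        channel=str(payload["channel"]),
        amount=None if amount is None else float(amount),
        metadata=dict(payload.get("metadata") or {}),
    )
```

For example, a payload with `"signal_type": "login_attempt"`, a customer ID, and a channel yields a `RuntimeSignal`, while a payload carrying an extra field such as a raw log dump raises `ValueError`. Rejecting unknown fields (rather than silently dropping them) makes contract violations by caller systems visible.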

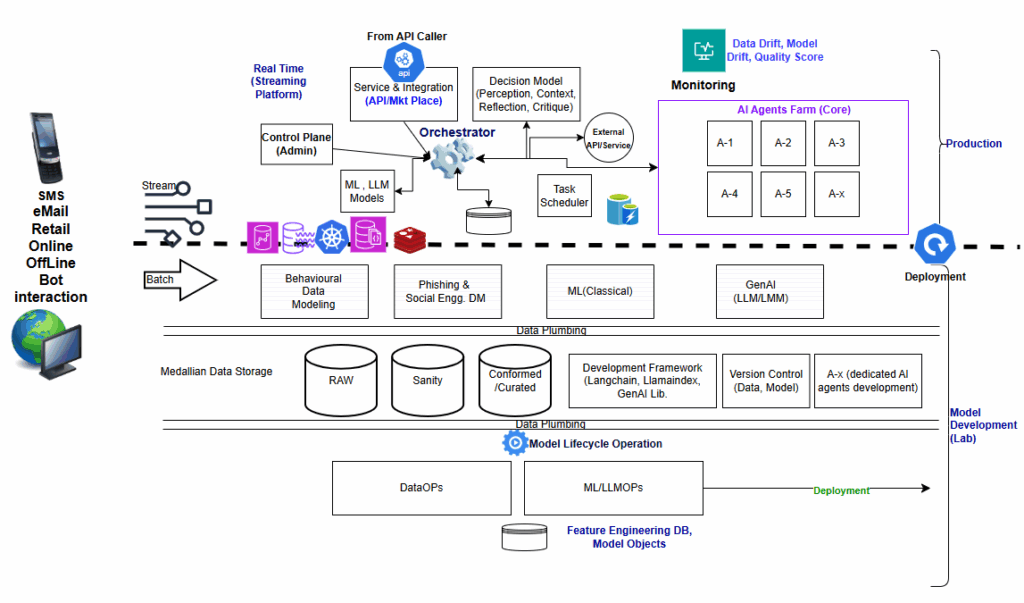

The architecture diagram below brings this design to life.

The Architectural Blueprint

The UFDP architecture is built across two tiers:

Model Development Tier (Lab): This is the intelligence creation layer, where ideas are explored, agents are trained, and behavior is simulated before any code reaches production. Key steps and components include:

- Data Ingestion & Transformation: Ingests raw data (both batch and streaming) from behavioral, transactional, device, and identity systems. Cleansing, wrangling, and transformation prepare it for downstream use.

- Feature Engineering & Cataloging: Extracts structured signals (features), applies sensitivity tagging, and catalogs them for versioning and reuse.

- Behavioral & Process Modeling: Builds models to detect user anomalies, phishing patterns, interaction abuse, and system compromise.

- ML and GenAI Pipelines: Develops both classical ML scoring models and GenAI reasoning flows for rule generation, contextual understanding, and decision explanation.

- Agentic AI Prototyping: Designs fraud-specific agents (e.g., A1, A2, A3, ..., Ax) using perception-reflection loops and goal-driven behavior.

- Orchestration Simulation: Models how agents interact and respond to real-time events using an Agentic Orchestrator (e.g., via the Model Context Protocol, MCP).

- LangChain & LlamaIndex: Used together to build intelligent fraud detection workflows. LangChain stitches together reasoning steps, orchestrates API or microservice calls, and manages agent interactions, while LlamaIndex structures knowledge (from documents, databases, or logs) for efficient context retrieval and semantic grounding during agent simulation and training.

- MLOps, LLMOps, and DataOps: Jointly manage the entire lifecycle, from ingesting and preparing data and engineering features to versioning models and prompts, deploying agents, and ensuring auditability. MLOps governs model training, performance, and retraining; LLMOps manages prompt integrity and semantic consistency; and DataOps ensures data quality, lineage, and contract enforcement. The result is a robust feedback loop between Lab and Production.

- Version Control & Promotion: All agents, models, and orchestration components are version-controlled and promoted to the production tier through gated releases.
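As an illustration of the feature engineering and cataloging step, the sketch below keeps versioned feature definitions with a sensitivity tag. It is a hypothetical in-memory stand-in: the class names, the three sensitivity tiers, and the rule that PII-tagged features are excluded from promotion are all assumptions, and a real deployment would use a dedicated feature store and data catalog.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    """Illustrative sensitivity tiers applied when a feature is cataloged."""
    PUBLIC = "public"
    INTERNAL = "internal"
    PII = "pii"


@dataclass(frozen=True)
class FeatureSpec:
    name: str
    version: int
    source: str              # e.g. "behavioral", "transactional", "device", "identity"
    sensitivity: Sensitivity
    description: str


class FeatureCatalog:
    """Hypothetical catalog keyed by (name, version) so features can be reused and rolled back."""

    def __init__(self) -> None:
        self._specs: dict[tuple[str, int], FeatureSpec] = {}

    def register(self, spec: FeatureSpec) -> None:
        key = (spec.name, spec.version)
        if key in self._specs:
            raise ValueError(f"{spec.name} v{spec.version} is already registered")
        self._specs[key] = spec

    def latest(self, name: str) -> FeatureSpec:
        """Return the highest registered version of a feature."""
        versions = [s for (n, _), s in self._specs.items() if n == name]
        if not versions:
            raise KeyError(name)
        return max(versions, key=lambda s: s.version)

    def promotable(self) -> list[FeatureSpec]:
        """Features allowed to back production signals (assumed policy: no PII)."""
        return [s for s in self._specs.values() if s.sensitivity is not Sensitivity.PII]
```

Keying on `(name, version)` means a new feature definition never overwrites an old one, which is what allows a promoted model to keep pointing at the exact feature version it was trained on.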

Production Execution Tier: This is the live decision environment, where intelligence meets execution in real time. Here, UFDP operates as a service, continuously evaluating real-world events for signs of fraud.


- Caller systems (e.g., digital banking apps, payment platforms, onboarding systems) trigger an API call to the UFDP service when a transaction or user action needs real-time evaluation.

- The Agentic AI Orchestrator receives this call and activates the relevant pre-deployed agents (e.g., A1 to Ax) based on the input context, be it login behavior, transaction metadata, or device fingerprint.

- Each agent performs a targeted evaluation, such as checking for behavioral drift, social engineering signals, transaction structuring patterns, or synthetic identity artifacts.

- The orchestrator aggregates outputs into a unified risk score, which is returned to the calling system in milliseconds—along with decision recommendations (approve, hold, escalate).

- Every evaluated interaction is logged for auditability, feeding telemetry data back to the Lab for continuous learning and tuning.

This setup keeps production lean and secure—processing only curated signals, avoiding raw data handling, and maintaining strict boundaries from development environments. It ensures UFDP is always on, adaptive, and continuously improving.
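The runtime flow described above (activate agents by context, score, aggregate, recommend) can be sketched in plain Python. This is a minimal illustration, not UFDP's orchestrator: the agent functions, their weights, the signal fields, and the 0.4/0.7 decision thresholds are all hypothetical, and a weighted average is only one possible aggregation rule.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List

# Hypothetical agent interface: takes a signal dict, returns a risk score in [0, 1].
AgentFn = Callable[[dict], float]


@dataclass(frozen=True)
class AgentSpec:
    name: str
    handles: FrozenSet[str]  # signal types that activate this agent
    weight: float            # relative influence on the unified score
    evaluate: AgentFn


def behavioral_drift_agent(signal: dict) -> float:
    """Toy check: login from an unfamiliar country, at an unusual hour."""
    score = 0.0
    if signal.get("country") and signal.get("country") != signal.get("home_country"):
        score += 0.6
    if signal.get("local_hour", 12) < 5:
        score += 0.3
    return min(score, 1.0)


def structuring_agent(signal: dict) -> float:
    """Toy check: cash deposit just under a $10,000 reporting threshold."""
    amount = signal.get("amount", 0.0)
    return 0.8 if 9_000 <= amount < 10_000 else 0.0


AGENTS: List[AgentSpec] = [
    AgentSpec("A1_behavioral_drift", frozenset({"login_attempt", "transaction"}), 0.6, behavioral_drift_agent),
    AgentSpec("A2_structuring", frozenset({"cash_deposit"}), 0.4, structuring_agent),
]


def orchestrate(signal: dict, hold_at: float = 0.4, escalate_at: float = 0.7) -> Dict[str, object]:
    """Activate matching agents, aggregate their scores, and recommend an action."""
    active = [a for a in AGENTS if signal["signal_type"] in a.handles]
    scores = {a.name: a.evaluate(signal) for a in active}
    if not active:
        risk = 0.0
    else:
        # Weighted average over the agents that actually ran.
        total = sum(a.weight for a in active)
        risk = sum(a.weight * scores[a.name] for a in active) / total
    if risk >= escalate_at:
        decision = "escalate"
    elif risk >= hold_at:
        decision = "hold"
    else:
        decision = "approve"
    return {"risk_score": round(risk, 3), "decision": decision, "agent_scores": scores}
```

A weighted average lets several moderate signals add up; taking the maximum over agents is a common alternative when any single strong signal should be enough to hold a transaction. Returning the per-agent scores alongside the decision also gives the audit log something to explain.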

Technology Stack Overview

- Agentic AI: Modular, autonomous detection agents built for specific fraud categories

- ML (Classical): For statistical scoring and anomaly flagging

- LLM/GenAI: Used for semantic analysis, summarization of fraud patterns, rule suggestion, and explanation generation

- LangChain & LlamaIndex (Lab): Enable context assembly, agent chaining, and integration of LLMs with knowledge sources

- MLOps & LLMOps: Manage the model lifecycle, including deployment, feedback capture, and automated retraining

- DataOps: Manages the data catalog, lineage, PII/SII sensitivity classification (data that could enable deanonymization), and integration governance
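The PII/SII handling that DataOps governs can be illustrated with keyed pseudonymization, one common technique (an assumption here, not a documented UFDP choice). The sketch uses Python's standard `hmac` and `hashlib` modules; the sensitive field names are hypothetical, and in practice the key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac
from typing import Any, Dict, Iterable

# Hypothetical sensitivity tags; in practice these would come from the data catalog.
SENSITIVE_FIELDS = {"email", "phone", "ssn", "account_number"}


def pseudonymize(value: str, key: bytes) -> str:
    """Replace a sensitive value with a keyed, deterministic token.

    HMAC (rather than a plain hash) resists dictionary attacks by anyone
    without the key, while identical inputs still map to identical tokens,
    so records can be joined without exposing the raw value.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record: Dict[str, Any], key: bytes,
                sensitive: Iterable[str] = SENSITIVE_FIELDS) -> Dict[str, Any]:
    """Return a copy of the record with non-null sensitive fields tokenized."""
    sensitive = set(sensitive)
    return {
        k: pseudonymize(str(v), key) if k in sensitive and v is not None else v
        for k, v in record.items()
    }
```

Because the tokens are deterministic, a feature such as "number of accounts sharing this email" can still be computed in the Lab on masked data.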

Interplay Between Sandbox and Production

- Models and logic developed in the sandbox (Lab) are versioned and promoted to production after validation

- Agent behavior and orchestration patterns are refined via simulation before live deployment

- The production environment provides low-latency detection and high-throughput streaming capacity
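The validation step before promotion is often automated as a gate. A minimal sketch, assuming hypothetical metrics (precision, recall, p99 latency) and illustrative thresholds; the actual UFDP promotion criteria are not specified in this article.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class ModelRelease:
    name: str
    version: str
    precision: float       # on a held-out validation set
    recall: float
    p99_latency_ms: float  # measured in simulation or load testing


def promotion_gate(candidate: ModelRelease,
                   incumbent: Optional[ModelRelease],
                   min_precision: float = 0.90,
                   max_p99_latency_ms: float = 50.0,
                   max_recall_drop: float = 0.01) -> Tuple[bool, List[str]]:
    """Decide whether a Lab artifact may be promoted; returns (approved, reasons)."""
    reasons: List[str] = []
    if candidate.precision < min_precision:
        reasons.append(f"precision {candidate.precision:.2f} below {min_precision:.2f}")
    if candidate.p99_latency_ms > max_p99_latency_ms:
        reasons.append(f"p99 latency {candidate.p99_latency_ms} ms above {max_p99_latency_ms} ms")
    # Guard against silently trading recall (missed fraud) for other gains.
    if incumbent is not None and candidate.recall < incumbent.recall - max_recall_drop:
        reasons.append(f"recall regressed from {incumbent.recall:.2f} to {candidate.recall:.2f}")
    return (not reasons, reasons)
```

Returning the list of reasons, rather than a bare boolean, gives each gated release an auditable record of why a candidate was blocked.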

Mapping to Fraud Categories (see Part 1 for the fraud category definitions)

- Behavioral Anomalies → Streaming detection using feature pipelines + ML scoring + real-time signal correlation

Example: A user who typically accesses their account from Dallas suddenly logs in from Vietnam at 2:45 a.m. and initiates a $75,000 wire transfer to a new recipient.

- Rule Violations → Predefined thresholds and dynamic rule generation using GenAI

Example: A customer makes multiple cash deposits of $9,900 into an account, each just below the $10,000 Currency Transaction Report (CTR) threshold, to avoid automated reporting to regulators (a form of structuring).

- Process Manipulation / Social Engineering → LLM-powered detection of phishing phrases, response pattern shifts, and device fingerprinting

Example: A fraudster impersonates a bank’s fraud department, convinces the customer to share OTPs, and initiates Zelle transfers from the victim’s account.

- System / Identity Compromise → Credential breach pattern recognition, API misuse tracing, authentication bypass detection

Example: Stolen credentials from a recent data breach are used to attempt mass logins across multiple banking systems, leading to unauthorized account access through credential stuffing attacks.
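Two of the example scenarios above translate into simple, deterministic checks that could sit alongside ML scoring. The sketch below is illustrative only: the 10% band below the threshold, the 7-day and 10-minute windows, and the count thresholds are assumed values, not regulatory or UFDP parameters, and a production detector would combine such rules with model scores rather than rely on them alone.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, List, Set, Tuple

CTR_THRESHOLD = 10_000.00  # cash threshold used in the structuring example


def flag_structuring(deposits: List[Tuple[datetime, float]],
                     window: timedelta = timedelta(days=7),
                     band: float = 0.10,
                     min_count: int = 3) -> bool:
    """Flag >= min_count cash deposits just under the CTR threshold within one window."""
    near = sorted(ts for ts, amt in deposits
                  if CTR_THRESHOLD * (1 - band) <= amt < CTR_THRESHOLD)
    start = 0
    for end, ts in enumerate(near):
        # Slide the left edge forward until the window spans at most `window`.
        while ts - near[start] > window:
            start += 1
        if end - start + 1 >= min_count:
            return True
    return False


def flag_credential_stuffing(failed_logins: List[Tuple[datetime, str, str]],
                             window: timedelta = timedelta(minutes=10),
                             min_usernames: int = 20) -> Set[str]:
    """Return source IPs whose failed logins hit many distinct usernames within one window."""
    by_ip: Dict[str, List[Tuple[datetime, str]]] = defaultdict(list)
    for ts, ip, username in failed_logins:
        by_ip[ip].append((ts, username))
    flagged: Set[str] = set()
    for ip, events in by_ip.items():
        events.sort()
        for i, (start_ts, _) in enumerate(events):
            users = {u for ts, u in events[i:] if ts - start_ts <= window}
            if len(users) >= min_usernames:
                flagged.add(ip)
                break
    return flagged
```

Under these assumed defaults, three $9,900 deposits spread over four days would be flagged, while the same three deposits spread over two months would not. Counting distinct usernames per source, rather than raw failures, is what separates credential stuffing from a single user mistyping a password.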

Closing Thoughts: Evolving with Purpose

Architecting UFDP is about more than integration—it’s about orchestration, adaptability, and intelligence. Your systems shouldn’t just detect fraud—they should predict, explain, and evolve. In Part 3 (Closing), we’ll explore how to operationalize this architecture with real-world practices—focusing on environment setup, continuous feedback, performance tuning, and co-innovation strategy to make UFDP a living, learning defense platform.

Stay tuned!