The backup process has been part of our IT ecosystem for ages. Over time, people have used “backup” interchangeably with synchronization, data transfer and file copying, and also when describing HA (High Availability) and DR (Disaster Recovery) solutions. In this article I focus on backup as an integral part of infrastructure management, contrast it with DR, synchronization, copying and similar processes, and highlight recent trends and solutions in this area.

As industry becomes more dynamic, digital and fast, data has to be made available everywhere as quickly as possible. Delivery channels are expanding into e-commerce, m-commerce, social media, kiosks and other digital channels. Operational applications such as ERP, CRM and SCM receive user input, process it, and enrich it with data streams fed by other enterprises or processes. They primarily store the result in one location, in tables or files, but the same processed or unprocessed data then has to be distributed to multiple locations to make 24x7 delivery through multiple locations and delivery channels possible. A CDN (Content Delivery Network) is one of the most efficient and convenient ways to distribute large volumes of data to end users, and CDN services are built primarily around data copy and synchronization processes. There are CDN service providers as well as private CDN players such as Amazon.

Protection of Virtual Environment: While the core of the data center is certainly important, much of that core is now moving to the virtualized environment. For a variety of reasons these environments tend to run on their own storage systems, but the data protection process should neither treat them like a bunch of standalone physical servers nor be managed separately from the rest of the data protection processes in the environment. An important requirement in any virtual environment backup discussion is how the data protection process will deal with the dynamic nature of that environment. Since creating a virtual server is much easier and less costly than provisioning a physical one, virtual machines can appear on the network and start running production applications without the backup manager ever knowing about them. The backup process needs to detect virtual machines automatically when they are created and continue to protect them when they move.
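The detection requirement above can be illustrated with a minimal sketch that compares the hypervisor's current inventory against the backup catalog. It assumes the inventory is already available as a plain list; the `VM` type, the `reconcile` function and the catalog layout are hypothetical names for illustration, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    vm_id: str  # hypothetical identifier that stays stable across migrations
    host: str   # host the VM currently runs on


def reconcile(inventory: list[VM], protected: dict[str, str]) -> dict[str, list[str]]:
    """Compare the current inventory with the backup catalog.

    `protected` maps vm_id -> host recorded at the last backup (assumed layout).
    """
    current = {vm.vm_id: vm.host for vm in inventory}
    # VMs that exist but were never backed up
    new = sorted(v for v in current if v not in protected)
    # VMs that are protected but now run on a different host
    moved = sorted(v for v in current if v in protected and protected[v] != current[v])
    # VMs in the catalog that no longer exist in the inventory
    gone = sorted(v for v in protected if v not in current)
    return {"new": new, "moved": moved, "gone": gone}
```

Run on a schedule, a check like this would let the backup job add the "new" VMs to its policy and update the recorded host for the "moved" ones without manual intervention.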

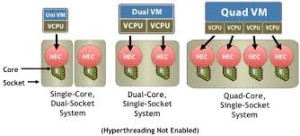

VM Density (host-to-VM ratio): Another important trend in the virtual environment is the increasing density of virtual machines. Density is being enabled by the generational improvement in processing power provided by x86 servers. The higher the VM-to-host ratio, the better the virtual server ROI becomes. A key roadblock when trying to increase VM density is data protection. Off-VM protection and high levels of automation, when implemented correctly, will remove this roadblock.

As virtual environments move from a test phase to “virtual first” initiatives, the number of virtual machines and the storage capacity they consume are increasing. For these reasons, ‘off-virtual machine’ backup is a necessity. The VMs need to be protected without placing an undue load on each and every one of them. Off-VM backup also eases the management burden by shifting the focus to backing up at the hypervisor layer rather than at each individual virtual machine.

Different approaches (HA vs Server Clustering): Let’s look at the basic differences among HA (High Availability), server clustering, recovery solutions and so on from a backup and copying standpoint. In the virtual environment (VM ecosystem), HA is the process that ensures higher system availability by recovering VMs from failed hosts. This process monitors the hosts, detects any host failure and automatically restarts the affected VMs on another host. Server clustering is a relatively costlier affair: it involves building redundancy for all resources and components in a server installation, including compute, network, bandwidth and memory. Most HA installations rely on heartbeats. For example, in a VMware setup managed through vCenter Server, heartbeats are maintained among all hosts running hypervisors such as ESX/ESXi, and the moment there is a glitch in the heartbeat, the impacted VMs are restarted on another host. To recover the state of a process, a continuous backup or copy process must always be running.
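The heartbeat-and-restart behaviour described above can be sketched roughly as follows. This is an illustrative model only, not how any specific hypervisor implements HA; the function names, the timeout and the "least loaded host" placement rule are all assumptions.

```python
def find_failed_hosts(last_heartbeat: dict[str, float], now: float, timeout: float) -> set[str]:
    """A host is treated as failed if its last heartbeat is older than `timeout` seconds."""
    return {host for host, seen in last_heartbeat.items() if now - seen > timeout}


def plan_restarts(placement: dict[str, str], hosts: list[str], failed: set[str]) -> dict[str, str]:
    """Return vm -> target host for every VM that was running on a failed host.

    `placement` maps vm -> current host. Targets are chosen as the least
    loaded healthy host (an assumed policy, ties broken by host name).
    """
    to_restart = sorted(vm for vm, host in placement.items() if host in failed)
    healthy = [h for h in hosts if h not in failed]
    if to_restart and not healthy:
        raise RuntimeError("no healthy host available for restart")
    # Count VMs currently running on each healthy host
    load = {h: 0 for h in healthy}
    for host in placement.values():
        if host in load:
            load[host] += 1
    plan = {}
    for vm in to_restart:
        target = min(healthy, key=lambda h: (load[h], h))
        plan[vm] = target
        load[target] += 1
    return plan
```

Note that the plan only decides where a VM restarts; the restarted VM still needs its disk state, which is why shared storage or a continuously running copy process is a precondition.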

In certain legacy environments, such as AS400, enterprises usually schedule daily, weekly, quarterly, semi-annual and annual backup activities. These ensure that data snapshots are maintained at different frequencies for different applications and jobs, so that IT staff can recover and reinstate the data as of a particular date or period, or carry out data analysis to address a business bug. The retention period depends on the backup frequency: daily backups can be retained for a week, monthly backups for a year, and annual backups for 5-6 years, as the case may be. This addresses storage and media constraints. However, such a backup process is very labor intensive and prone to human error, and MUST be replaced by a modern backup process such as BRMS (Backup, Recovery and Media Services).
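As a rough illustration of the retention scheme above, the following sketch flags backups that have outlived their retention period. The retention values come from the examples in the text (with 6 years picked from the "5-6 years" range); the `RETENTION` table and the `expired` function are hypothetical names, not part of BRMS or any other product.

```python
from datetime import date, timedelta

# Retention per backup type, following the examples in the text.
# The 6-year value for annual backups is an assumption within "5-6 years".
RETENTION = {
    "daily": timedelta(days=7),
    "monthly": timedelta(days=365),
    "annual": timedelta(days=6 * 365),
}


def expired(backups: list[tuple[str, date]], today: date) -> list[tuple[str, date]]:
    """Return the (kind, taken_on) backups whose age exceeds their retention period."""
    return [
        (kind, taken_on)
        for kind, taken_on in backups
        if today - taken_on > RETENTION[kind]
    ]
```

Media or storage holding the expired backups can then be recycled, which is how a tiered schedule keeps capacity requirements bounded.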

Key Drivers and impact: BYOD is becoming part of the culture in the professional world and is catching on quickly across the rest of the corporate world; users are more mobile than ever and now carry more unique copies of corporate data than in the past. It is important that these mobile data sets are backed up. The backup manager can no longer wait until the user returns to home base for a backup to occur. Data has to be protected while users are on the move. This means dealing with the sub-par bandwidth common on broadband or hotel WiFi. As with back office application access, users want to get at their data from any type of device they have at hand, whether a smartphone or a tablet. If IT does not address this core need, users will be left to find their own solutions. The result can be the introduction of the public cloud into the organization, which can jeopardize corporate assets and make the overall data protection process even more complicated. IT is better served by providing this service to its users, and the backup process is an ideal way to accomplish that task.

Branch offices also need the backup process to be automated and streamlined rather than left at the mercy of backup staff availability. I think the backup process is improving and is increasingly scheduled and automated, with frequency defined by RPO (Recovery Point Objective) and RTO (Recovery Time Objective) requirements. Data in branch or local offices is high in volume, importance and impact, and widely dispersed, so the backup process there requires a bit more focus than at other locations. One solution is to keep a local vault for storing data while replicating it to the main data center.
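To make the link between backup frequency and RPO/RTO concrete, here is a small worked check. It assumes the simplest possible model: the worst-case data loss equals the backup interval, and restore time is data size divided by a sustained restore throughput. The function name and all parameters are hypothetical.

```python
def meets_objectives(
    interval_h: float,        # hours between backups
    rpo_h: float,             # maximum tolerable data loss, in hours
    data_gb: float,           # amount of data to restore
    restore_gb_per_h: float,  # assumed sustained restore throughput
    rto_h: float,             # maximum tolerable downtime, in hours
) -> dict[str, object]:
    """Check a backup schedule against RPO and RTO under a simplified model."""
    restore_h = data_gb / restore_gb_per_h
    return {
        "rpo_ok": interval_h <= rpo_h,  # worst case loses one full interval
        "rto_ok": restore_h <= rto_h,
        "restore_h": restore_h,
    }
```

For instance, a nightly backup (24-hour interval) cannot satisfy a 4-hour RPO regardless of how fast the restore is, so the frequency has to be raised or a replication step added.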

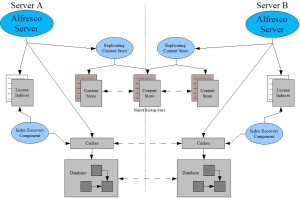

DR and BCP: Server and desktop consolidation lowers operational costs but creates an “all your eggs in one basket” risk. Consolidation and further virtualization also bring the expectation from users that IT is much more responsible for data at remote and branch offices. This makes it that much more important to ensure that data is available from a disaster recovery perspective. While HA and cluster processes protect the applications that can’t afford downtime, the bulk of the servers (virtual and physical) will have to be recovered. Making sure that data is in place and accessible is an important requirement for modern enterprise applications. This includes leveraging deduplication to replicate hot backup data to the DR data center, and even leveraging tape for cold, off-site data movement, to protect against events that HA doesn’t cover, such as corruption, terrorism or a site-wide disaster. Cloud could be an effective solution for the DR process, at least for SMEs (Small & Medium Enterprises). Full capacity does not have to be purchased up front, since the cloud scales granularly as data grows internally. It also means that every primary storage system upgrade doesn’t have to result in a 2X storage capacity purchase for the backup process (capacity for the on-premise copy and the off-site copy).
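The idea of using deduplication to replicate backup data to the DR site can be sketched as follows: data is split into chunks, each chunk is identified by its hash, and only chunks the DR site does not already hold are sent. This is a minimal sketch assuming fixed-size chunks and an in-memory dictionary standing in for the remote store; real products typically use variable-size chunking and a network protocol, and the names `replicate` and `restore` are illustrative.

```python
import hashlib


def replicate(data: bytes, remote_store: dict[str, bytes], chunk_size: int = 4096) -> tuple[list[str], int]:
    """Send only chunks missing from `remote_store`.

    Returns the recipe (ordered chunk digests needed to rebuild `data`)
    and the number of bytes that actually had to be transferred.
    """
    recipe: list[str] = []
    sent = 0
    for i in range(0, len(data), chunk_size):
        chunk = data[i:i + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in remote_store:
            remote_store[digest] = chunk  # stands in for a network transfer
            sent += len(chunk)
        recipe.append(digest)
    return recipe, sent


def restore(recipe: list[str], remote_store: dict[str, bytes]) -> bytes:
    """Rebuild the original data at the DR site from its recipe."""
    return b"".join(remote_store[d] for d in recipe)
```

Because successive backups share most of their chunks, the bytes sent per run tend to be a small fraction of the backup size, which is what makes replication over a WAN link practical.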

Summary: HA, DRP, BCP, load balancing, virtualization, failover and similar services are crucial elements of the modern data center, and they are even more critical in the midst of digital, e-commerce, and fast, agile business expansion. The health of these services depends largely on how well designed and modern the backup process in the IT environment is. Desktop and server virtualization, together with the continued increase in back office applications, have consolidated server and desktop data at the primary data center and made protecting data at that central site more important than ever. The corporate backup process needs to evolve from a simple insurance policy into a valuable business asset for the enterprise. The backup process, when properly implemented, has one advantage over every other process: it has all the data in the company, whether that data is in the primary data center, a branch office or on remote laptops. With this consolidation, backup is the ideal point from which data analysis, mining, behavior and trending insights can be derived, and it could also be leveraged for compliance, risk and legal services. All that’s needed is the right overlay of indexing and search capabilities, and every data object, regardless of location, can be accessed for these functions. I hope business and IT establishments maintain the backup process as a mission-critical activity in their respective IT ecosystems.